A Chief Innovation Officer’s Honest Assessment of AI-Assisted Development — Including the Pitfalls

With our R package Tplyr now over five years old, continued expansion and maintenance have exposed the consequences of some ugly design decisions we made early on. Because some of these were fundamental design choices, I estimated that, as one of Tplyr’s core developers, it would take me about two weeks of fully dedicated time to refactor them out of existence. It’s the type of change that is pervasive throughout the codebase, and as such, not the type of update anybody ever really wants to embark on.

Given the wide range of agentic coding tools now available to developers, I decided to see how Kiro, AWS’s new agentic AI IDE, would perform on the task. Overall, planning and implementation took about 6-8 hours, most of which didn’t require my full attention. After that, I spent another 2-3 hours on cleanup and my own investigation.

Here’s a brief summary of the refactor, with a link to my full experience report.

The Challenge

Tplyr is a large, interconnected R package used in clinical reporting. The issue I wanted to address was architectural: when Tplyr processed data, many functions evaluated their code within the table and layer object environments (via evalq()) rather than within their own function environments. This pattern existed throughout the codebase.
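To make the problem concrete, here is a minimal R sketch of the general pattern. It is not Tplyr's actual code: the `layer` environment, its `target` data, and the `build_counts()` function are hypothetical stand-ins for a Tplyr layer object. Evaluating a multi-line block with `evalq()` inside an object's environment binds every intermediate variable into that object, while an ordinary function keeps intermediates in its own environment and hands back only the result.

```r
# Hypothetical stand-in for a layer object: an environment holding its data
layer <- new.env()
layer$target <- data.frame(grp = c("a", "a", "b"))

# Pattern being refactored away: a multi-line block evaluated inside the
# object's environment. Every assignment below becomes a binding in `layer`.
evalq({
  counts <- table(target$grp)
  total <- sum(counts)
  built_table <- as.data.frame(counts)
  built_table$pct <- built_table$Freq / total
}, envir = layer)

print(ls(layer))  # intermediates `counts` and `total` have leaked into `layer`

# Refactored pattern (hypothetical helper): a plain function reads what it
# needs, keeps intermediates in its own environment, and returns the result.
build_counts <- function(target) {
  counts <- table(target$grp)
  total <- sum(counts)
  built_table <- as.data.frame(counts)
  built_table$pct <- built_table$Freq / total
  built_table
}

layer2 <- new.env()
layer2$target <- data.frame(grp = c("a", "a", "b"))
# Only the final result is written back to the object
layer2$built_table <- build_counts(layer2$target)

print(ls(layer2))  # just `built_table` and `target`
```

Both versions produce the same table; the difference is what gets left behind in the object, and that leftover state is what makes this kind of code hard to reason about as a package grows.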

The change was conceptually straightforward, but fairly complex in practice:

- Widespread, complicated changes across the entire codebase

- Changes would be deeply interconnected and have a lot of potential side effects

- Parity with existing results was nonnegotiable (nothing user-facing could change)

For a new developer unfamiliar with Tplyr, this refactor could easily take a month. They would have to understand the architecture, identify the problem, create a strategic plan, and implement it without losing track of the dependencies. Even for me, it was a sizable effort, making it a good stress test for an AI agent.

Why Kiro?

I’ve been using Claude Code for a while and have seen how effective agentic tools can be if you manage the context correctly. LLMs take input and generate output, so it’s your job (or the tool’s) to keep that context relevant within the window the model can process.

Kiro is built on top of VS Code, so the interface is familiar. Whereas Claude Code is generally CLI-driven and integrates with VS Code through plugins, Kiro is built directly into the IDE itself. It offers two modes: a vibe coding mode, where you chat and build, and a spec mode. In spec mode, Kiro works with you to build requirements, create a design, and generate a task list for implementation before any code is written. This means the context is already structured when implementation begins.

Watching Kiro Work

The first thing I asked Kiro to do was analyze the codebase and document its conventions. From there, Kiro began writing requirements. Here’s an example:

Acceptance Criteria:

- Zero uses of evalq() for executing multi-line code blocks

- Preserve all existing functionality

- No unintended variable bindings in table/layer environments
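One way to check the last criterion mechanically is to compare an environment's bindings before and after a step runs. The sketch below uses base R only; `new_bindings()`, `add_summary()`, `leaky_summary()`, and `make_layer()` are hypothetical helpers for illustration, not functions from Tplyr or from the tests Kiro generated.

```r
# Hypothetical helper: run f(env) and return the names of any bindings it added
new_bindings <- function(env, f) {
  before <- ls(env, all.names = TRUE)
  f(env)
  setdiff(ls(env, all.names = TRUE), before)
}

# Hypothetical refactored step: intermediates stay local, only the result is bound
add_summary <- function(layer) {
  counts <- table(layer$target$grp)
  layer$built_table <- as.data.frame(counts)
  invisible(layer)
}

# Hypothetical evalq()-style step: `counts` leaks into the layer environment
leaky_summary <- function(layer) {
  evalq({
    counts <- table(target$grp)
    built_table <- as.data.frame(counts)
  }, envir = layer)
  invisible(layer)
}

# Hypothetical factory for a fresh layer-like environment
make_layer <- function() {
  layer <- new.env()
  layer$target <- data.frame(grp = c("a", "b", "b"))
  layer
}

clean_added <- new_bindings(make_layer(), add_summary)
leaky_added <- new_bindings(make_layer(), leaky_summary)

# The refactored step adds exactly one binding; the evalq() version adds two
stopifnot(identical(clean_added, "built_table"))
stopifnot(setequal(leaky_added, c("built_table", "counts")))
```

Because the check looks only at what ends up bound in the object, it stays valid however the step is implemented internally.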

Kiro wrote thorough documentation and used that to build a task list of about 30 steps.

Instead of making sweeping changes, Kiro took an incremental approach: after each modification, it reran the tests before proceeding.

One interesting moment came when Kiro was refactoring Tplyr’s nested count layers, which are among the most complex parts of the codebase. Kiro hit an error, recognized that the culprit function was already scheduled for an update later in the task list, and temporarily deferred the failures rather than trying to resolve them immediately. That kind of prioritization is something even experienced developers (myself included) don’t always do well.

The Results

By the time Kiro completed its work, the entire codebase had been refactored and approximately 98% of the existing tests were passing. The remaining 2% were mostly wonky variable inheritance issues, which were straightforward for me to fix once I identified them.

Here’s how the effort compared between doing this manually and using Kiro:

- My estimate (manual approach): 80-100 hours over two dedicated weeks

- Kiro’s time (planning and implementation): 6-8 hours

- My cleanup time: 2-3 hours

The 6-8 hours Kiro spent on the task also didn’t require my constant attention. My dedicated focus was approximately half that time.

The Pitfalls

In full transparency, I hit a few small issues along the way:

Execution interruptions: Kiro would hang when a terminal command required interaction, such as a git command that opened a pager. Kiro allows you to create steering rules to control agent behavior. Once I identified this pattern, I created a rule for terminal commands to minimize the chance of needing manual interaction.

Unit test considerations: Kiro added unit tests, but some of them failed even though the code under test was correct (false positives). This reinforced an old lesson from Tplyr: having good tests is more important than having a lot of tests.

Implications for Clinical Development

No one asked me to write this assessment, test this tool, or provide an endorsement. In my current role, I don’t have as much time to code as I once did, but I have no shortage of ideas. Tools like this give me the freedom to execute on those ideas.

More importantly, they help me articulate what I want to accomplish and communicate it to my team. Kiro didn’t just refactor code; it documented requirements, created a strategic plan, and executed it with a level of thoughtfulness comparable to that of an experienced developer.

For data science leaders, statisticians, and developers: if a core developer’s two-week refactor can be completed in a handful of hours, we are fundamentally accelerating the pace of innovation in clinical analytics.

The Full Technical Deep Dive

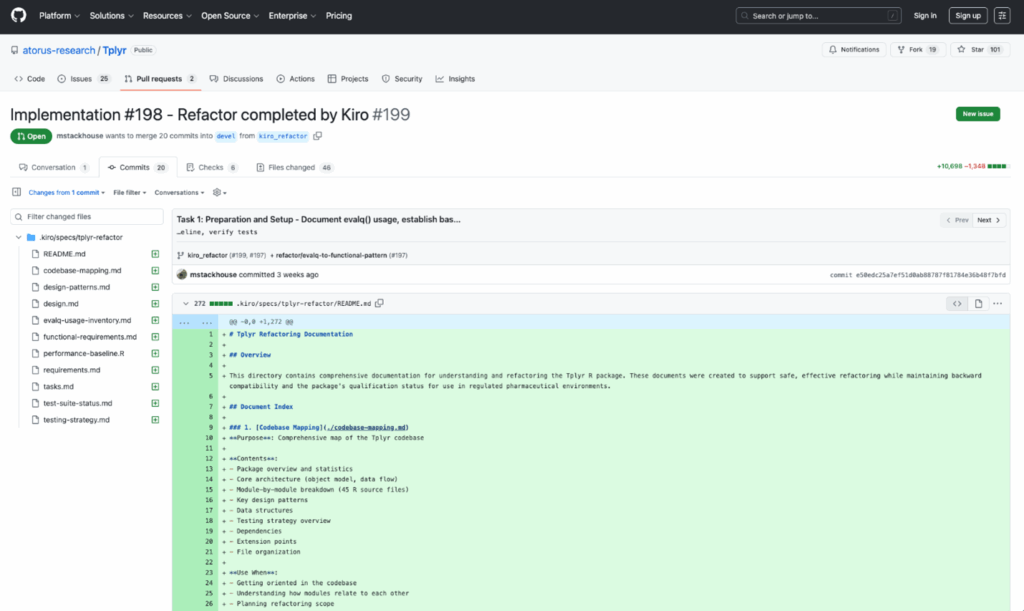

You can find the overview, requirements, design documents, and implementation approach for this AI-assisted refactoring test on GitHub:

View full report in Pull Request #199

You’ll find Kiro’s specification documents under .kiro/specs/tplyr_refactor.

About the Author

Michael Stackhouse

Chief Innovation Officer, Atorus

Michael Stackhouse leads innovation in clinical data engineering and analytics, specializing in bridging modern data science frameworks with compliant, scalable solutions for the life sciences industry. With extensive expertise in CDISC and open-source technologies, his work spans automation, data science, big data solutions, and clinical analytics within regulated environments. An award-winning industry leader, Michael is a frequent invited speaker, panelist, and published contributor, working to advance the future of clinical data science.

References & Resources

- Stackhouse, Michael. 2025. Implementation #198 – Refactor completed by Kiro. Tplyr project (GitHub Pull Request #198). https://github.com/atorus-research/Tplyr/pull/198

- Stackhouse, Michael. 2025. Refactor evaluation of code within Tplyr environments using evalq(). Tplyr project (GitHub Pull Request #199). https://github.com/atorus-research/Tplyr/pull/199

- Atorus. 2025. Tplyr: .kiro/specs/tplyr-refactor (branch: kiro_refactor) [Code repository directory]. GitHub. https://github.com/atorus-research/Tplyr/tree/kiro_refactor/.kiro/specs/tplyr-refactor

- Stackhouse, Michael. 2025. Kiro specification documents for Tplyr refactor. Tplyr project, .kiro/specs/tplyr_refactor. https://github.com/atorus-research/Tplyr

- Kiro. 2025. Agentic AI development from prototype to production. Kiro. https://kiro.dev/

- Anthropic. 2025. Claude Code: AI coding agent for terminal & IDE — Build, debug, and ship code with AI assistance. Claude. https://claude.com/product/claude-code

- Swaminathan, Nikhil, and Deepak Singh. 2025. Introducing Kiro. Kiro (blog). https://kiro.dev/blog/introducing-kiro/